Illustration of the DeepETA model structure. Here is the complete DeepETA model to answer this question. Now, there is only one challenge left to cover, and by far, the most interesting is how they made it more general. Discretization is regularly used in production to speed up computation as the speed it gives clearly outweighs the error the duplicate values may bring. Meaning that they take continuous values and make them much easier to compute by clustering similar values together. Instead, it scales with dimensions, something they can control that is much smaller.Īnother great improvement in speed is the discretization of inputs.

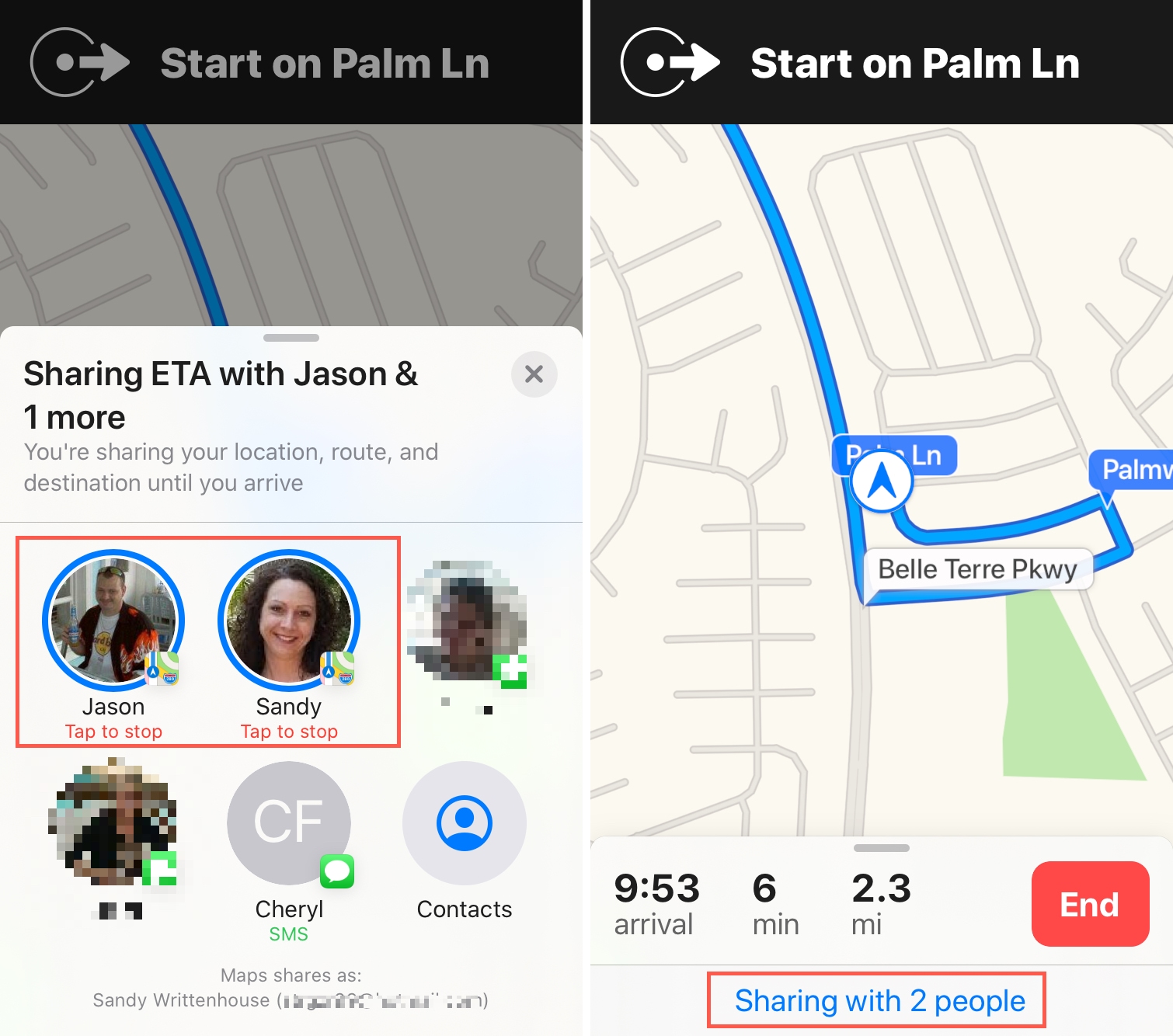

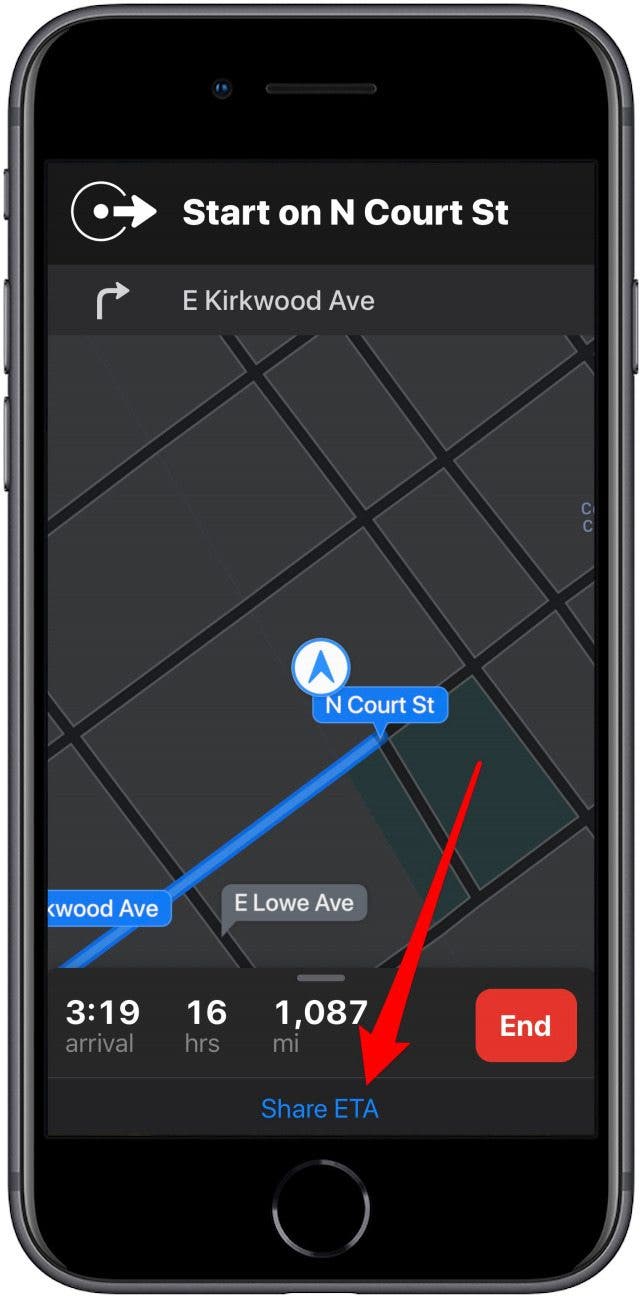

This means that if the input is long, transformers will be extremely slow, and this is often the case for them with as much information and routing data. The change with the biggest impact was to instead use a linear transformer, which scales with the dimension of the input instead of the input’s length. They’ve tried it, and it was too slow, so they made some changes. First, you must be thinking “but transformers are huge and slow models how can it be of lower latency than XGBoost?!” I’ve already covered transformers numerous times on my channel, so I won’t go into how it works in this article, but I still wanted to highlight a few specific features for this one. You guessed it: a transformer! Are you surprised? I’m definitely not.Īnd this directly answers the first challenge, which was to make the model more accurate than XGBoost. It also takes more information about real-time activities like traffic, weather, or even the nature of the request, like delivery or a rideshare pickup.Īll this extra information is necessary to improve from the shortest-path algorithms we mentioned that are highly efficient but far from intelligent or real-world proof.Īnd what does this model use as an architecture? Then, the model takes in these transformed features with the spatial origin and destination and time of the request as a temporal feature. This pre-processing module starts by taking map data and real-time traffic measurements to produce an initial estimated time of arrival for any new customer request. This makes it much easier for the model as it can directly focus on optimized data with much less noise and far smaller inputs, a first step in optimizing for latency issues. There’s a whole pre-processing system to make this data more digestible for the model. Image from Uber’s blog.ĭeepETA is really powerful and efficient because it doesn’t simply take data and generate a prediction. Hybrid approach of ETA post-processing using ML models. 
A deep learning model that improved upon XGBoost for all of those. All orthogonal challenges that are complex to solve, even for machine learning or AI. They wanted something faster, more accurate, and more general to be used for drivers, riders, and food delivery.

XGBoost is extremely powerful and used in many applications but was limited in Uber’s case as the more it grew, the more latency it had. Previous arrival time prediction tools, including Uber, were built with what we call shortest-path algorithms, which are not well suited for real-world predictions since they do not consider real-time signals.įor several years Uber used XGBoost, a well-known gradient-boosted decision tree machine learning library. Many different algorithms exist to estimate travel on such road networks, but I don’t think any are as optimized as Uber’s. Used both for Uber and Uber Eats, DeepETA can magically organize everything in the background so that riders, drivers, and food are fluently going from point a to point b in time as efficiently as possible. DeepETA is Uber’s most advanced algorithm for estimating arrival times using deep learning. We will answer these questions in this article with their arrival time prediction algorithm: DeepETA. How can Uber deliver food and always arrive on time or a few minutes before? How do they match riders to drivers so that you can *always* find a Uber? All that while also managing all the drivers.
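To make the scaling argument about the linear transformer more concrete, here is a minimal sketch of one common linear-attention formulation. It is an illustration under assumptions, not Uber’s implementation: the article does not say which feature map DeepETA uses, so the `elu(x) + 1` map, the function names, and the toy inputs are all hypothetical choices. The point is only that the keys and values are summarized once into a small matrix whose size depends on the feature dimension, so no length-by-length attention matrix is ever built.

```python
import math


def feature_map(vector):
    # Assumed feature map: elu(x) + 1, which keeps every entry strictly positive.
    return [x + 1.0 if x > 0 else math.exp(x) for x in vector]


def linear_attention(queries, keys, values):
    """Kernelized attention whose cost grows linearly with sequence length."""
    d = len(keys[0])    # feature dimension of queries and keys
    m = len(values[0])  # feature dimension of values
    kv = [[0.0] * m for _ in range(d)]  # running sum of phi(k) v^T, size d x m
    k_sum = [0.0] * d                   # running sum of phi(k), size d
    for key, value in zip(keys, values):
        fk = feature_map(key)
        for a in range(d):
            k_sum[a] += fk[a]
            for b in range(m):
                kv[a][b] += fk[a] * value[b]
    outputs = []
    for query in queries:
        fq = feature_map(query)
        norm = sum(fq[a] * k_sum[a] for a in range(d))
        outputs.append(
            [sum(fq[a] * kv[a][b] for a in range(d)) / norm for b in range(m)]
        )
    return outputs


def quadratic_reference(queries, keys, values):
    """Same attention, computed the slow way with one weight per (query, key) pair."""
    m = len(values[0])
    outputs = []
    for query in queries:
        fq = feature_map(query)
        weights = [sum(x * y for x, y in zip(fq, feature_map(key))) for key in keys]
        total = sum(weights)
        outputs.append(
            [sum(w * v[b] for w, v in zip(weights, values)) / total for b in range(m)]
        )
    return outputs


# Toy inputs (hypothetical): 3 key/value pairs, 2 queries, feature dimension 2.
keys = [[0.1, -0.5], [1.2, 0.3], [-0.7, 0.8]]
values = [[1.0], [2.0], [3.0]]
queries = [[0.5, 0.5], [-1.0, 2.0]]

fast = linear_attention(queries, keys, values)
slow = quadratic_reference(queries, keys, values)
```

Both functions produce the same result up to floating-point rounding, but `linear_attention` makes a single pass over the keys, so doubling the input length roughly doubles the work instead of quadrupling it.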

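The discretization of inputs described in this article can be sketched in the same spirit. This is an assumption-heavy toy, not DeepETA’s pipeline: the article only says that similar continuous values are clustered together, so the quantile-based boundaries, the bucket count, the `EmbeddingTable` class, and the sample distances below are all hypothetical. The idea shown is that a continuous value becomes a small integer bucket, and the model then does a cheap table lookup instead of computing on the raw number.

```python
import bisect
import random


def quantile_boundaries(samples, num_buckets):
    """Pick boundaries so each bucket holds roughly the same number of samples."""
    ordered = sorted(samples)
    return [ordered[(len(ordered) * i) // num_buckets] for i in range(1, num_buckets)]


def bucketize(value, boundaries):
    """Map a continuous value to the index of the bucket it falls into."""
    return bisect.bisect_right(boundaries, value)


class EmbeddingTable:
    """Hypothetical lookup table: one fixed vector per bucket."""

    def __init__(self, num_buckets, dim, seed=0):
        rng = random.Random(seed)
        self.rows = [
            [rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(num_buckets)
        ]

    def lookup(self, bucket):
        return self.rows[bucket]


# Made-up trip distances in kilometres, used only to derive the boundaries.
distances_km = [0.4, 1.1, 1.3, 2.0, 2.2, 3.5, 4.8, 7.9, 12.0, 25.0, 3.1, 0.9]
boundaries = quantile_boundaries(distances_km, num_buckets=4)
table = EmbeddingTable(num_buckets=4, dim=3)

# Two nearby distances land in the same bucket and share one vector.
vector_a = table.lookup(bucketize(2.0, boundaries))
vector_b = table.lookup(bucketize(2.2, boundaries))
```

The trade-off is the one the article describes: 2.0 km and 2.2 km become indistinguishable to the model, but the lookup is far cheaper than processing raw continuous values.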